From lithography machines to medical instruments, real-world edge systems demand deterministic behaviour, reliable sensor interpretation, and carefully engineered algorithms that go far beyond simply training an AI model.

Artificial Intelligence (AI) is often glorified as the future of automation and portrayed as the ultimate solution for efficiency, decision-making and innovation across industries. It is marketed as a transformative technology for everything from healthcare and finance to autonomous systems and industrial processes.

In practice, this narrative does not reflect present reality. AI in its current form remains too limited to be relied upon for mission-critical applications that require deterministic behaviour, such as those governed by IEC/ISO standards. Although AI performs impressively in controlled settings, it often struggles when exposed to the complexity, variability and unpredictability of real-world environments, particularly when those conditions fall outside the assumptions used to train the model.

This degradation in performance occurs because AI lacks common-sense reasoning, i.e. it does not understand the real world in the way that humans do. Trained largely on synthetic or otherwise limited datasets, it has no intrinsic grasp of the physical or situational subtleties present in operational environments. When real-world conditions differ from those seen during training, AI systems often misinterpret context, leading to unreliable or misleading outcomes, which is an unacceptable limitation for any mission-critical application.

What is a mission-critical application?

An application is classed as mission-critical when results must be delivered predictably, repeatably and within guaranteed timing constraints defined by system and stakeholder requirements. In many operational environments, unreliable or delayed behaviour can lead directly to financial loss, disrupted processes or regulatory non-compliance. In embedded edge systems (e.g. semiconductor lithography machines, factory automation, medical instruments and logistics automation), algorithms interact directly with physical processes under strict constraints such as bounded latency, limited computational resources, low power consumption and long-term reliability, while often needing to comply with IEC/ISO standards.

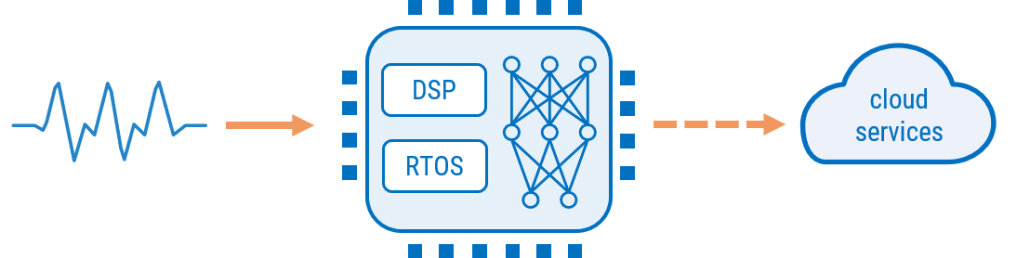

At the heart of these systems are physical measurements: sensors produce signals that reflect real-world processes, but these signals are often corrupted by noise, interference and environmental disturbances. This broader challenge is increasingly discussed under the banner of Physical AI, i.e. intelligent systems that perceive, reason and act within the physical world through sensors, signals and real-time control. Achieving this in practice requires the integration of deterministic digital signal processing (DSP) algorithms, machine learning (ML) and embedded systems design, forming the basis of Real-Time Edge Intelligence (RTEI).

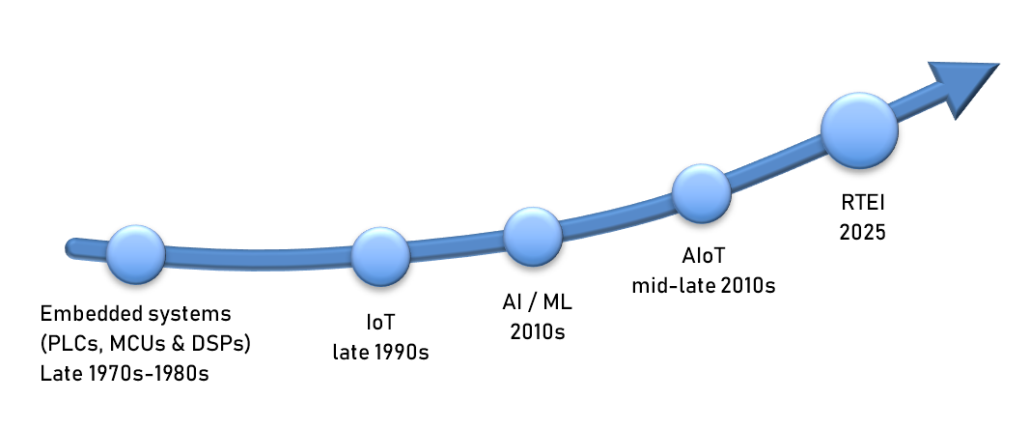

Intelligent machines existed long before AI

The idea of embedding intelligence into machines predates modern AI by decades. Early automation systems implemented rules-based behaviour using mechanical logic and, later, electromechanical control systems. With the development of electronics, these concepts evolved into embedded systems built on PLCs, microcontrollers (MCUs) and later digital signal processors (DSPs), allowing complex signal analysis and control algorithms to be implemented in software while maintaining robust deterministic behaviour.

The rise of IoT

As sensing technologies became cheaper and connectivity expanded, embedded devices increasingly became networked, leading to the Internet of Things (IoT). In many architectures, devices primarily act as data forwarders, streaming sensor measurements to cloud infrastructure where ML models perform analysis.

While this approach enables powerful functionality, it also introduces limitations such as network latency, bandwidth consumption and dependence on continuous connectivity. For many real‑world systems, intelligence must therefore move closer to the data source.

The challenge of real‑world sensor data

Real-world sensor data are rarely clean, as the signals are affected by measurement noise, powerline interference and environmental disturbances. As such, extracting meaningful information from these measurements has traditionally relied on signal processing techniques, implemented either using analog electronics or digitally using a DSP or microcontroller. These techniques perform tasks such as filtering, spectral analysis and feature extraction. Filtering removes noise, spectral analysis reveals hidden patterns and feature extraction transforms raw measurements into structured information describing the underlying system behaviour. These deterministic methods make signal interpretation predictable and verifiable.
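The three steps named above can be illustrated with a minimal sketch in plain Python. Everything specific here is an assumption made for illustration: the 1 kHz sample rate, the 5 Hz process signal, the 50 Hz mains interference and the helper names are all hypothetical. The filter is a simple moving average chosen because its length spans exactly one mains cycle, and production systems would typically use more carefully designed filters.

```python
import math

FS_HZ = 1000.0     # assumed sample rate
MAINS_HZ = 50.0    # assumed powerline frequency
SIGNAL_HZ = 5.0    # assumed frequency of the measured process


def make_signal(num_samples):
    """Synthetic sensor signal: 5 Hz process (amplitude 1.0) plus 50 Hz hum (amplitude 0.5)."""
    return [
        math.sin(2 * math.pi * SIGNAL_HZ * i / FS_HZ)
        + 0.5 * math.sin(2 * math.pi * MAINS_HZ * i / FS_HZ)
        for i in range(num_samples)
    ]


def moving_average(x, taps):
    """Filtering: FIR moving average, returning only fully overlapped outputs."""
    return [sum(x[i:i + taps]) / taps for i in range(len(x) - taps + 1)]


def tone_amplitude(x, freq_hz):
    """Spectral analysis: amplitude of one frequency component via a single-bin DFT."""
    w = 2 * math.pi * freq_hz / FS_HZ
    re = sum(v * math.cos(w * i) for i, v in enumerate(x))
    im = sum(v * math.sin(w * i) for i, v in enumerate(x))
    return 2.0 * math.hypot(re, im) / len(x)


def rms(x):
    """Root-mean-square level of the signal."""
    return math.sqrt(sum(v * v for v in x) / len(x))


def extract_features(x):
    """Feature extraction: reduce the raw samples to a few descriptive numbers."""
    return {
        "rms": rms(x),
        "signal_amp": tone_amplitude(x, SIGNAL_HZ),
        "mains_amp": tone_amplitude(x, MAINS_HZ),
    }


TAPS = int(FS_HZ / MAINS_HZ)            # 20 taps span exactly one mains cycle
raw = make_signal(1000 + TAPS - 1)      # extra samples so the filtered window is 1 s long
filtered = moving_average(raw, TAPS)    # 1000 samples

raw_features = extract_features(raw[:1000])
filtered_features = extract_features(filtered)
print(raw_features)
print(filtered_features)
```

Because the averaging window covers one full mains cycle, the 50 Hz component cancels almost exactly, while the 5 Hz component passes with only slight attenuation (a gain of roughly 0.98). The same input always produces the same output, which is what makes this kind of processing straightforward to verify.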

The evolution from Edge AI to RTEI

ML models operate most effectively on a few well-designed features based on the physics of the sensor data. To facilitate this, DSP algorithms (designed using human intelligence) serve as a fundamental preprocessing step for signal enhancement and feature extraction, so that the ML model can perform its classification task on high-quality feature data. It is this combination that can provide high classification accuracy, even when conditions deviate slightly from the original training datasets.
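As a rough illustration of this division of labour, the sketch below pairs a DSP front end with a deliberately tiny classifier. The scenario is hypothetical: a vibration signal with a 10 Hz fundamental, a 120 Hz component that grows when a fault is present, and 50 Hz hum. The frequencies, amplitudes, labels and function names are all assumptions, and the nearest-centroid classifier merely stands in for whatever ML model a real design would use.

```python
import math

FS_HZ = 1000.0   # assumed sample rate
N = 1000         # one-second window, so each 1 Hz bin holds whole cycles


def synth(fund_amp, fault_amp, hum_amp):
    """Hypothetical vibration signal: 10 Hz fundamental, 120 Hz fault tone, 50 Hz hum."""
    def tone(freq_hz, i):
        return math.sin(2 * math.pi * freq_hz * i / FS_HZ)
    return [
        fund_amp * tone(10.0, i) + fault_amp * tone(120.0, i) + hum_amp * tone(50.0, i)
        for i in range(N)
    ]


def tone_amplitude(x, freq_hz):
    """Single-bin DFT amplitude at freq_hz."""
    w = 2 * math.pi * freq_hz / FS_HZ
    re = sum(v * math.cos(w * i) for i, v in enumerate(x))
    im = sum(v * math.sin(w * i) for i, v in enumerate(x))
    return 2.0 * math.hypot(re, im) / len(x)


def features(x):
    """DSP front end: one physics-based feature, fault tone relative to the fundamental."""
    return [tone_amplitude(x, 120.0) / tone_amplitude(x, 10.0)]


def fit_centroids(labelled):
    """Average the feature vectors of each class."""
    sums = {}
    for label, vec in labelled:
        total, count = sums.get(label, ([0.0] * len(vec), 0))
        sums[label] = ([t + v for t, v in zip(total, vec)], count + 1)
    return {label: [t / count for t in total] for label, (total, count) in sums.items()}


def classify(centroids, vec):
    """Return the label whose centroid is closest to vec (squared Euclidean distance)."""
    def sq_dist(a, b):
        return sum((p - q) ** 2 for p, q in zip(a, b))
    return min(centroids, key=lambda label: sq_dist(centroids[label], vec))


training = [
    ("healthy", features(synth(1.0, 0.00, 0.1))),
    ("healthy", features(synth(1.0, 0.05, 0.1))),
    ("faulty", features(synth(1.0, 0.40, 0.1))),
    ("faulty", features(synth(1.0, 0.50, 0.1))),
]
centroids = fit_centroids(training)

# Unseen operating condition: doubled sensor gain and five times the hum.
healthy_result = classify(centroids, features(synth(2.0, 0.10, 0.5)))
faulty_result = classify(centroids, features(synth(2.0, 0.90, 0.5)))
print(healthy_result, faulty_result)
```

The feature is a ratio of two spectral amplitudes, so it is unaffected by the change in overall gain, and the hum sits in a frequency bin the feature never looks at. In this sketch that is why both test signals are still classified correctly under conditions absent from the training set. A classifier fed raw samples would have to learn that invariance from data instead.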

As such, engineers are increasingly adopting architectures in which DSP, ML and the embedded computing platform (e.g., Arm Cortex processors with hardware support for DSP and ML workloads) are developed together, while remaining aligned with stakeholder requirements and relevant IEC/ISO compliance standards. This augmented approach improves reliability while ensuring long-term robustness across a wide range of edge applications.

RTEI Solutions Handbook

The architectural principles behind Real-Time Edge Intelligence (RTEI), including practical DSP techniques, embedded implementation strategies and real-world case studies, are explored in detail in the RTEI Solutions Handbook, which may be purchased directly from ASN.