Besides delivery, drones are already used as an ‘eye in the sky’. Or, with an ultra-wideband radar attached, you can fly the drone wherever you want, perhaps land it, and start measuring. For instance:

- Drones help farmers get higher yields by giving them a literal overview of which spots in their fields are developing well and which are performing less well, so that they can take action on the lower-performing spots.

- Drones are well suited to finding locations for roads, waterworks, energy fields and other infrastructure.

- Drones give a real-time overview of a situation. For example: an overview of road congestion that helps the city council take appropriate action.

- They can measure while covering large areas. For instance: a large crop field where the farmer can see only the first crops from the road. They also have the advantage that large areas can be captured at a glance.

- Drones can go to places that are difficult or dangerous for humans to reach, such as jungles or large mountainous regions. They can also aid in the construction and maintenance of structures such as large building sites, bridges and high towers, or when action on dangerous gases is needed. In future, drones may even carry out repairs and installations themselves.

- For better or worse, drones can also be used for guarding assets. With sensors, they can guard an area by looking for movement and so establish a protected zone. Unfortunately, the technology is also available to terrorists, who will find uses for drones to maximise chaos.
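
As an illustration of "looking for movement", here is a minimal sketch of frame differencing, one common and very simple way to detect motion between two camera frames. It assumes a landed or steadily hovering drone, so that the camera itself is not moving, and grayscale frames given as plain lists of pixel values. The function names and both thresholds are hypothetical choices for this example, not part of any particular drone or sensor product.

```python
from typing import List

# A grayscale frame: rows of pixel brightness values in the range 0-255.
Frame = List[List[int]]


def changed_fraction(previous: Frame, current: Frame, pixel_threshold: int = 25) -> float:
    """Return the fraction of pixels whose brightness changed by more than pixel_threshold."""
    same_shape = len(previous) == len(current) and all(
        len(a) == len(b) for a, b in zip(previous, current)
    )
    if not same_shape:
        raise ValueError("frames must have the same dimensions")

    total = sum(len(row) for row in current)
    if total == 0:
        return 0.0

    changed = sum(
        1
        for row_prev, row_cur in zip(previous, current)
        for p, c in zip(row_prev, row_cur)
        if abs(c - p) > pixel_threshold
    )
    return changed / total


def movement_detected(
    previous: Frame,
    current: Frame,
    pixel_threshold: int = 25,   # assumed: ignore small brightness noise
    area_threshold: float = 0.02,  # assumed: alarm if more than 2% of pixels changed
) -> bool:
    """Flag movement when enough of the image differs between two frames."""
    return changed_fraction(previous, current, pixel_threshold) > area_threshold
```

In practice the thresholds would need tuning for lighting changes, wind-blown vegetation and sensor noise, and a moving drone would need image stabilisation before a comparison like this makes sense.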

Privacy, Safety and Security

Especially in crowded areas, privacy is a big issue. A big complaint is the noise that drones produce: in a 2017 study, NASA found that people find the noise of drones more annoying than the sound of ‘normal’ traffic. Besides noise, privacy has another factor: the camera. Drones may fly uninvited over your property, so what exactly do they record? What if you don’t want to be filmed in your garden?

Another practical problem is the risk that a drone drops its cargo, or falls out of the air itself. Amazon is already experimenting with a drone that self-destructs when it risks crashing. In crowded areas, the risk of damage, or even worse, of hitting someone, can’t be overlooked.

For drones to gain trust and acceptance, these challenges have to be resolved. Legislation to regulate drone traffic is expected.

Security

As anyone can and will buy a drone, security is another issue. Anyone can just buy one online or even in a toy store and fly it anywhere they want. The annoying noise of a drone in a nature park might be an inconvenience. But more harmful uses of drones might take place as well, for instance by terrorists, who can use drones for unwanted surveillance and for creating chaos. You can also load anything onto a drone, fly it to a destination and put it into action. Governments can forbid flying drones in the proximity of (for instance) a nuclear power plant, but how do you prevent someone from actually flying one there? While many questions about drone flying have some potential answers, this one is still unsolved.
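
To make the idea of a forbidden zone concrete, the sketch below shows how a circular no-fly zone could be checked in software using the haversine great-circle distance. It is an illustration only: the function names are hypothetical, and a check like this only helps on drones whose software cooperates, so it does not answer the open question of stopping someone who is determined to fly there.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in decimal degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def inside_no_fly_zone(
    drone_lat: float,
    drone_lon: float,
    zone_lat: float,
    zone_lon: float,
    radius_m: float,
) -> bool:
    """Return True if the drone is within radius_m of the zone centre."""
    return haversine_m(drone_lat, drone_lon, zone_lat, zone_lon) <= radius_m
```

A cooperating drone could run such a check against its GPS position before take-off and during flight, and refuse to enter the zone.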

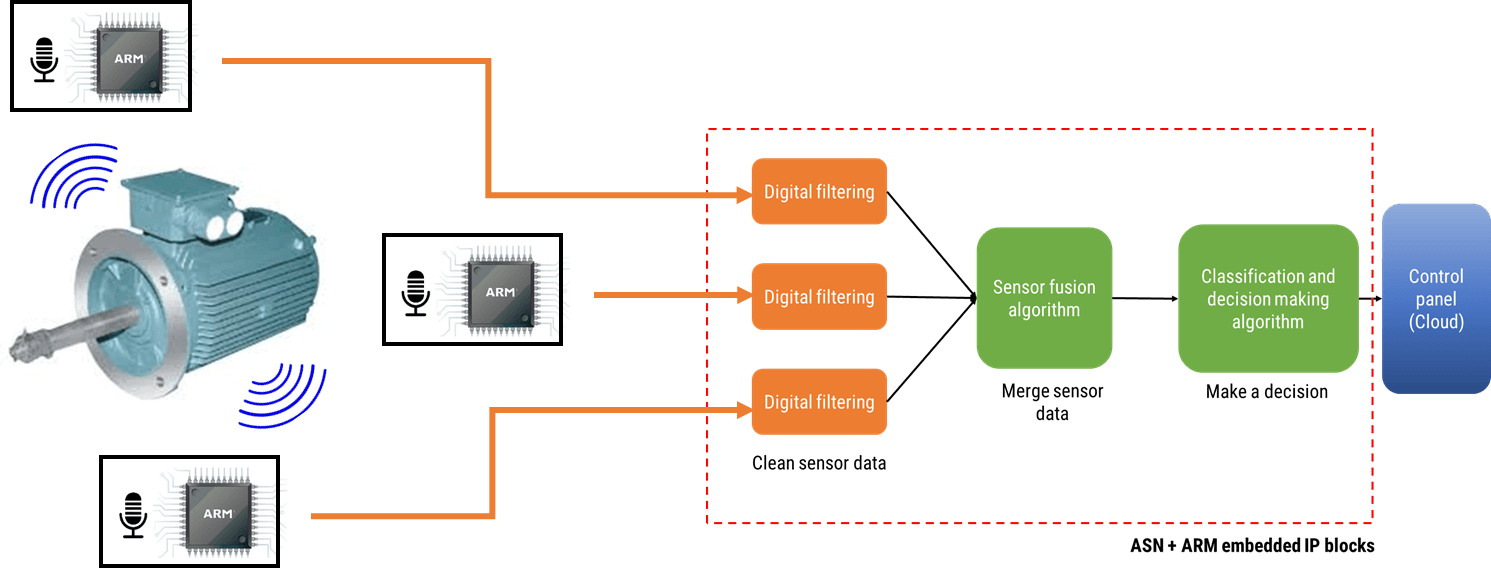

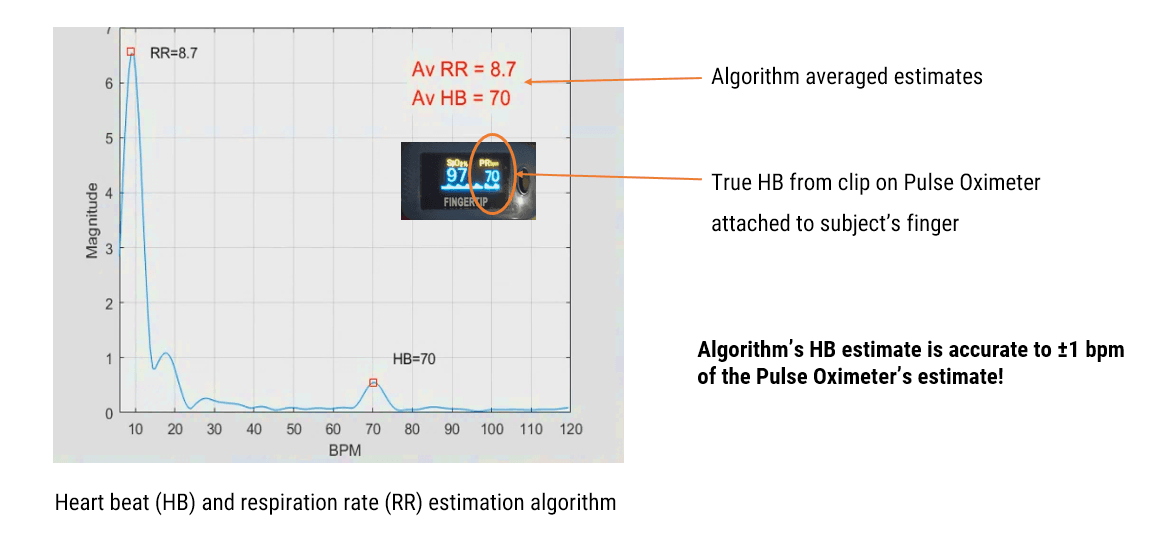

In all cases, sensors play a pivotal role in solving the technical questions. ASN Filter Designer can help with sensor measurement through its real-time feedback and powerful signal analyzer. How? Look at ASN Filter Designer or mail our consultancy service at: designs@advsolned.com

Do you agree with this list? Do you have other suggestions? Please let us know!