Posts

DSP for engineers: the ASN Filter Designer is the ideal tool to analyze and filter the sensor data quickly. Create an algorithm within hours instead of days. When you are working with sensor data, you probably recognize these challenges:

- My sensor data signals are too weak to even make an analysis. So, strengthening of the signals is needed

- Where I would expect a flat line, the data looks like a mess because of interference and other containments. I need to clean the data first before analysis

Until now, you’ve probably spent days or even weeks working on your signal analysis and filtering? The development trajectory is generally slow and very painful.

In fact, just think about the number of hours that you could have saved if you had design tool that managed all of the algorithmic details for you. ASN Filter Designer is an industry standard solution used by thousands of professional developers worldwide working on IoT projects.

Our close collaboration with Arm and ST ensures that all designed filters are 100% compatible with all Arm Cortex-M processors, such as ST’s popular STM32 family.

Challenges for engineers

- 90% of IoT smart sensors are based on Arm Cortex-M processor technology

- Sensor signal processing is difficult

- Sensors have trouble with all kinds of interference and undesirable components

- How do I design a filter that meets my requirements?

- How can I verify my designed filter on test data?

- Clean sensor data is required for better product performance

- Time consuming process to implement a filter on an embedded processor

- Time is money!

Designers hit a ‘brick wall’ with traditional tooling. Standard tooling requires an iterative, trial and error approach or expert knowledge. Using this approach, a considerable amount of valuable engineering time is wasted. ASN Filter Designer helps you with an interactive method of design, whereby the tool automatically enters the technical specifications based on the graphical user requirements.

Fast DSP algorithm development

- Fully validated filter design: suitable for deployment in DSP, micro-controller, FPGA, ASIC or PC application.

- Automatic detailed design documentation: expediting peer review and lowing project risks by helping the designer create a paper trail.

- Simple handover: project file, documentation and test results provide a painless route for handover to colleagues or other teams.

- Easily accommodate other scenarios in the future: Design may be simply modified in the future to accommodate other requirements and scenarios, such as 60Hz powerline interference cancellation, instead of the European 50Hz.

ASN Filter Designer: the fast and intuitive filter designer

The ASN Filter Designer is the ideal tool to analyze and filter the sensor data quickly. When needed, you can easily deploy your data for further analyze for tools such as Matlab and Python. As such it’s ideal for engineers who need and powerful signal analyser and need to create a data filter for their IoT application. Certainly, when you have to create data filtering once in a while. Compared to other tools, you can create an algorithm within hours instead of days.

Easily deploy your algorithms to Matlab, Python, C++ and Arm

A big timesaver of the ASN Filter Designer is that you can easily deploy your algorithms to Matlab, Python, C++ or directly on an Arm microcontroller with the automatic code generators.

Instant pain relief

Just think about the number of hours that you could have saved if you had design tool that managed all of the algorithmic details for you.

ASN Filter Designer is an industry standard solution used by thousands of professional developers worldwide working on IoT projects. Our close collaboration with Arm and ST ensures that the all filters are 100% compatible with all Arm Cortex-M processors.

How much pain relief can 125 Euro buy you?

Because a lot of engineers need our ASN Filter Designer for a short time, a 125 Euro license for just 3 months is possible!

Just ask yourself: is 125 Euro a fair price to pay for instant pain relief and results? We think so. Besides, we have a license for 1 year and even a perpetual license. Download the demo to see for yourself or contact us for more information.

A digital filter is a mathematical algorithm that operates on a digital dataset (e.g. sensor data) in order extract information of interest and remove any unwanted information. Applications of this type of technology, include removing glitches from sensor data or even cleaning up noise on a measured signal for easier data analysis. But how do we choose the best type of digital filter for our application? And what are the differences between an IIR filter and an FIR filter?

Digital filters are divided into the following two categories:

- Infinite impulse response (IIR)

- Finite impulse response (FIR)

As the names suggest, each type of filter is categorised by the length of its impulse response. However, before beginning with a detailed mathematical analysis, it is prudent to appreciate the differences in performance and characteristics of each type of filter.

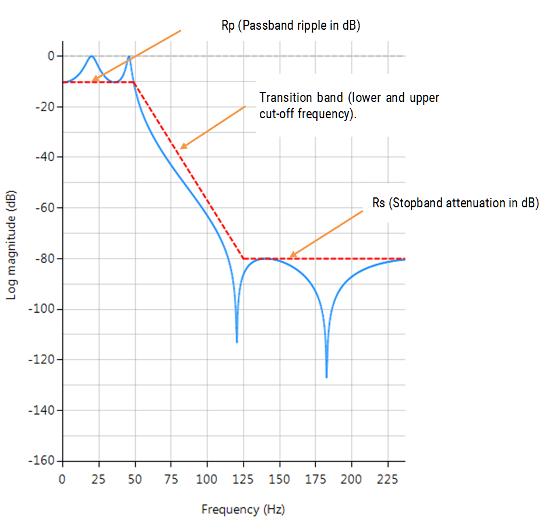

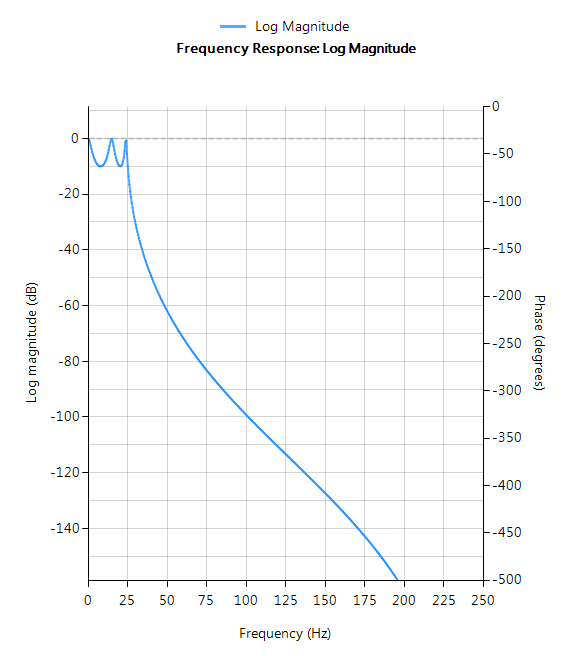

Example

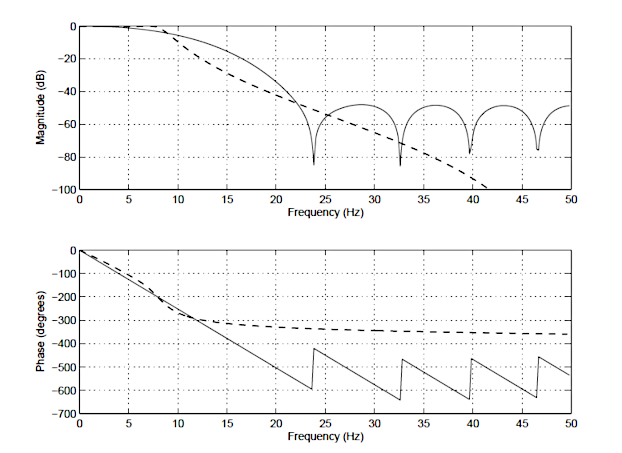

In order to illustrate the differences between an IIR and FIR, the frequency response of a 14th order FIR (solid line), and a 4th order Chebyshev Type I IIR (dashed line) is shown below in Figure 1. Notice that although the magnitude spectra have a similar degree of attenuation, the phase spectrum of the IIR filter is non-linear in the passband (\(\small 0\rightarrow7.5Hz\)), and becomes very non-linear at the cut-off frequency, \(\small f_c=7.5Hz\). Also notice that the FIR requires a higher number of coefficients (15 vs the IIR’s 10) to match the attenuation characteristics of the IIR.

These are just some of the differences between the two types of filters. A detailed summary of the main advantages and disadvantages of each type of filter will now follow.

IIR filters

IIR (infinite impulse response) filters are generally chosen for applications where linear phase is not too important and memory is limited. They have been widely deployed in audio equalisation, biomedical sensor signal processing, IoT/IIoT smart sensors and high-speed telecommunication/RF applications.

Advantages

- Low implementation cost: requires less coefficients and memory than FIR filters in order to satisfy a similar set of specifications, i.e., cut-off frequency and stopband attenuation.

- Low latency: suitable for real-time control and very high-speed RF applications by virtue of the low number of coefficients.

- Analog equivalent: May be used for mimicking the characteristics of analog filters using s-z plane mapping transforms.

Disadvantages

- Non-linear phase characteristics: The phase charactersitics of an IIR filter are generally nonlinear, especially near the cut-off frequencies. All-pass equalisation filters can be used in order to improve the passband phase characteristics.

- More detailed analysis: Requires more scaling and numeric overflow analysis when implemented in fixed point. The Direct form II filter structure is especially sensitive to the effects of quantisation, and requires special care during the design phase.

- Numerical stability: Less numerically stable than their FIR (finite impulse response) counterparts, due to the feedback paths.

FIR filters

FIR (finite impulse response) filters are generally chosen for applications where linear phase is important and a decent amount of memory and computational performance are available. They have a widely deployed in audio and biomedical signal enhancement applications. Their all-zero structure (discussed below) ensures that they never become unstable for any type of input signal, which gives them a distinct advantage over the IIR.

Advantages

- Linear phase: FIRs can be easily designed to have linear phase. This means that no phase distortion is introduced into the signal to be filtered, as all frequencies are shifted in time by the same amount – thus maintaining their relative harmonic relationships (i.e. constant group and phase delay). This is certainly not case with IIR filters, that have a non-linear phase characteristic.

- Stability: As FIRs do not use previous output values to compute their present output, i.e. they have no feedback, they can never become unstable for any type of input signal, which is gives them a distinct advantage over IIR filters.

- Arbitrary frequency response: The Parks-McClellan and ASN FilterScript’s firarb() function allow for the design of an FIR with an arbitrary magnitude response. This means that an FIR can be customised more easily than an IIR.

- Fixed point performance: the effects of quantisation are less severe than that of an IIR.

Disadvantages

- High computational and memory requirement: FIRs usually require many more coefficients for achieving a sharp cut-off than their IIR counterparts. The consequence of this is that they require much more memory and significantly a higher amount of MAC (multiple and accumulate) operations. However, modern microcontroller architectures based on the Arm’s Cortex-M cores now include DSP hardware support via SIMD (signal instruction, multiple data) that expedite the filtering operation significantly.

- Higher latency: the higher number of coefficients, means that in general a linear phase FIR is less suitable than an IIR for fast high throughput applications. This becomes problematic for real-time closed-loop control applications, where a linear phase FIR filter may have too much group delay to achieve loop stability.

- Minimum phase filters: A solution to ovecome the inherent N/2 latency (group delay) in a linear filter is to use a so-called minimum phase filter, whereby any zeros outside of the unit circle are moved to their conjugate reciprocal locations inside the unit circle. The result of the zero flipping operation is that the magnitude spectrum will be identical to the original filter, and the phase will be nonlinear, but most importantly the latency will be reduced from N/2 to something much smaller (although non-constant), making it suitable for real-time control applications.

For applications where phase is less important, this may sound ideal, but the difficulty arises in the numerical accuracy of the root-finding algorithm when dealing with large polynomials. Therefore, orders of 50 or 60 should be considered a maximum when using this approach. Although other methods do exist (e.g. the Complex Cepstrum), transforming higher-order linear phase FIRs to their minimum phase cousins remains a challenging task. - No analog equivalent: using the Bilinear, matched z-transform (s-z mapping), an analog filter can be easily be transformed into an equivalent IIR filter. However, this is not possible for an FIR as it has no analog equivalent.

Mathematical definitions

As discussed in the introduction, the name IIR and FIR originate from the mathematical definitions of each type of filter, i.e. an IIR filter is categorised by its theoretically infinite impulse response,

y(n)=\sum_{k=0}^{\infty}h(k)x(n-k)

\)

and an FIR categorised by its finite impulse response,

y(n)=\sum_{k=0}^{N-1}h(k)x(n-k)

\)

We will now analyse the mathematical properties of each type of filter in turn.

IIR definition

As seen above, an IIR filter is categorised by its theoretically infinite impulse response,

\(\displaystyle y(n)=\sum_{k=0}^{\infty}h(k)x(n-k) \)

Practically speaking, it is not possible to compute the output of an IIR using this equation. Therefore, the equation may be re-written in terms of a finite number of poles \(\small p\) and zeros \(\small q\), as defined by the linear constant coefficient difference equation given by:

y(n)=\sum_{k=0}^{q}b_k x(n-k)-\sum_{k=1}^{p}a_ky(n-k)

\)

where, \(\small a_k\) and \(\small b_k\) are the filter’s denominator and numerator polynomial coefficients, who’s roots are equal to the filter’s poles and zeros respectively. Thus, a relationship between the difference equation and the z-transform (transfer function) may therefore be defined by using the z-transform delay property such that,

\sum_{k=0}^{q}b_kx(n-k)-\sum_{k=1}^{p}a_ky(n-k)\quad\stackrel{\displaystyle\mathcal{Z}}{\longleftrightarrow}\quad\frac{\sum\limits_{k=0}^q b_kz^{-k}}{1+\sum\limits_{k=1}^p a_kz^{-k}}

\)

As seen, the transfer function is a frequency domain representation of the filter. Notice also that the poles act on the output data, and the zeros on the input data. Since the poles act on the output data, and affect stability, it is essential that their radii remain inside the unit circle (i.e. <1) for BIBO (bounded input, bounded output) stability. The radii of the zeros are less critical, as they do not affect filter stability. This is the primary reason why all-zero FIR (finite impulse response) filters are always stable.

BIBO stability

A linear time invariant (LTI) system (such as a digital filter) is said to be bounded input, bounded output stable, or BIBO stable, if every bounded input gives rise to a bounded output, as

\(\displaystyle \sum_{k=0}^{\infty}\left|h(k)\right|<\infty \)

Where, \(\small h(k)\) is the LTI system’s impulse response. Analyzing this equation, it should be clear that the BIBO stability criterion will only be satisfied if the system’s poles lie inside the unit circle, since the system’s ROC (region of convergence) must include the unit circle. Consequently, it is sufficient to say that a bounded input signal will always produce a bounded output signal if all the poles lie inside the unit circle.

The zeros on the other hand, are not constrained by this requirement, and as a consequence may lie anywhere on z-plane, since they do not directly affect system stability. Therefore, a system stability analysis may be undertaken by firstly calculating the roots of the transfer function (i.e., roots of the numerator and denominator polynomials) and then plotting the corresponding poles and zeros upon the z-plane.

An interesting situation arises if any poles lie on the unit circle, since the system is said to be marginally stable, as it is neither stable or unstable. Although marginally stable systems are not BIBO stable, they have been exploited by digital oscillator designers, since their impulse response provides a simple method of generating sine waves, which have proved to be invaluable in the field of telecommunications.

Biquad IIR filters

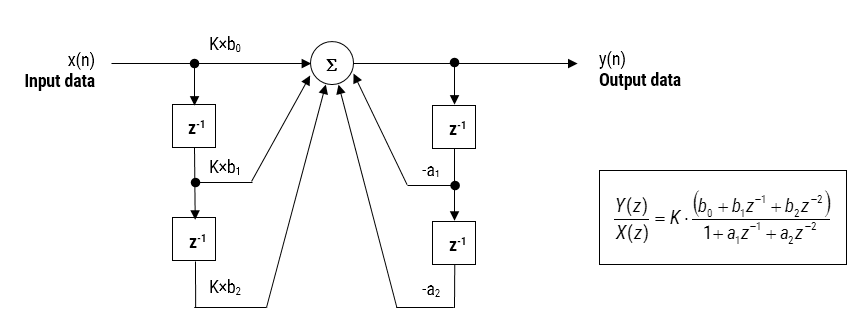

The IIR filter implementation discussed herein is said to be biquad, since it has two poles and two zeros as illustrated below in Figure 2. The biquad implementation is particularly useful for fixed point implementations, as the effects of quantization and numerical stability are minimised. However, the overall success of any biquad implementation is dependent upon the available number precision, which must be sufficient enough in order to ensure that the quantised poles are always inside the unit circle.

Figure 2: Direct Form I (biquad) IIR filter realization and transfer function.

Analysing Figure 2, it can be seen that the biquad structure is actually comprised of two feedback paths (scaled by \(\small a_1\) and \(\small a_2\)), three feed forward paths (scaled by \(\small b_0, b_1\) and \(\small b_2\)) and a section gain, \(\small K\). Thus, the filtering operation of Figure 1 can be summarised by the following simple recursive equation:

\(\displaystyle y(n)=K\times\Big[b_0 x(n) + b_1 x(n-1) + b_2 x(n-2)\Big] – a_1 y(n-1)-a_2 y(n-2)\)

Analysing the equation, notice that the biquad implementation only requires four additions (requiring only one accumulator) and five multiplications, which can be easily accommodated on any Cortex-M microcontroller. The section gain, \(\small K\) may also be pre-multiplied with the forward path coefficients before implementation.

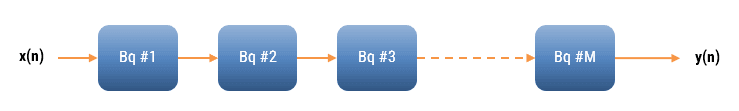

A collection of Biquad filters is referred to as a Biquad Cascade, as illustrated below.

The ASN Filter Designer can design and implement a cascade of up to 50 biquads (Professional edition only).

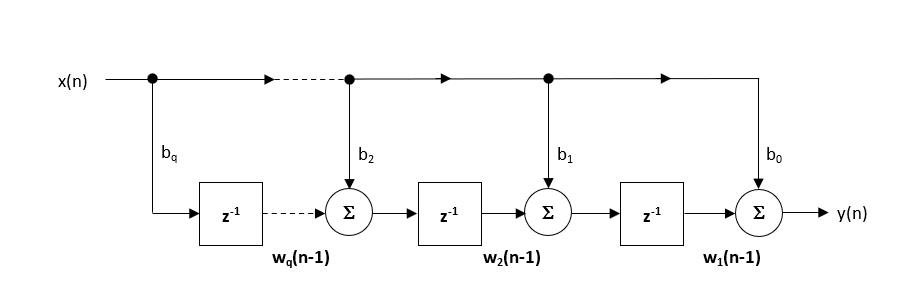

Floating point implementation

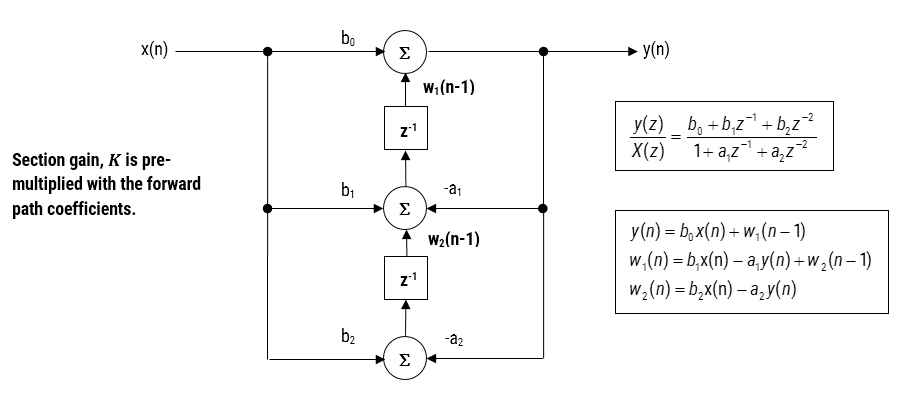

When implementing a filter in floating point (i.e. using double or single precision arithmetic) Direct Form II structures are considered to be a better choice than the Direct Form I structure. The Direct Form II Transposed structure is considered the most numerically accurate for floating point implementation, as the undesirable effects of numerical swamping are minimised as seen by analysing the difference equations.

Figure 3 – Direct Form II Transposed strucutre, transfer function and difference equations

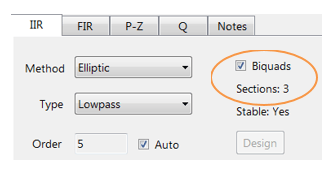

The filter summary (shown in Figure 4) provides the designer with a detailed overview of the designed filter, including a detailed summary of the technical specifications and the filter coefficients, which presents a quick and simple route to documenting your design.

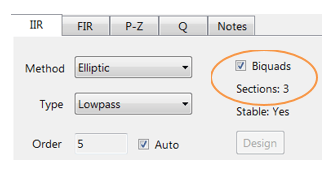

The ASN Filter Designer supports the design and implementation of both single section and Biquad (default setting) IIR filters.

FIR definition

Returning the IIR’s linear constant coefficient difference equation, i.e.

y(n)=\sum_{k=0}^{q}b_kx(n-k)-\sum_{k=1}^{p}a_ky(n-k)

\)

Notice that when we set the \(\small a_k\) coefficients (i.e. the feedback) to zero, the definition reduces to our original the FIR filter definition, meaning that the FIR computation is just based on past and present inputs values, namely:

y(n)=\sum_{k=0}^{q}b_kx(n-k)

\)

Implementation

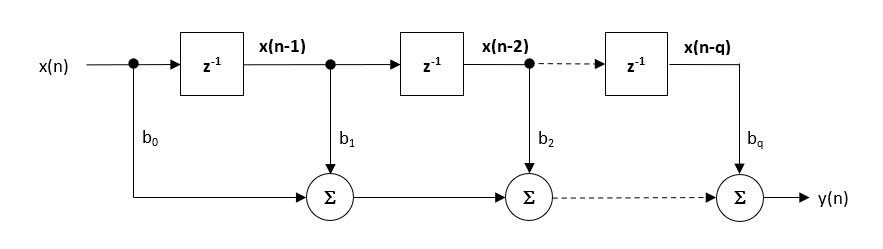

Although several practical implementations for FIRs exist, the direct form structure and its transposed cousin are perhaps the most commonly used, and as such, all designed filter coefficients are intended for implementation in a Direct form structure.

The Direct form structure and associated difference equation are shown below. The Direct Form is advocated for fixed point implementation by virtue of the single accumulator concept.

\(\displaystyle y(n) = b_0x(n) + b_1x(n-1) + b_2x(n-2) + …. +b_qx(n-q) \)

The recommended (default) structure within the ASN Filter Designer is the Direct Form Transposed structure, as this offers superior numerical accuracy when using floating point arithmetic. This can be readily seen by analysing the difference equations below (used for implementation), as the undesirable effects of numerical swamping are minimised, since floating point addition is performed on numbers of similar magnitude.

\(\displaystyle \begin{eqnarray}y(n) & = &b_0x(n) &+& w_1(n-1) \\ w_1(n)&=&b_1x(n) &+& w_2(n-1) \\ w_2(n)&=&b_2x(n) &+& w_3(n-1) \\ \vdots\quad &=& \quad\vdots &+&\quad\vdots \\ w_q(n)&=&b_qx(n) \end{eqnarray}\)

Implementing your filter on an Arm Cortex-M processor

Although a few processor technologies exist for microcontrollers (e.g. RISC-V, Xtensa, MIPS), over 90% of the microcontrollers used in the smart product market are powered by so-called Arm Cortex-M processors that offer a combination of high algorithmic performance, low-power and security. The Arm Cortex-M4 is a very popular choice with several silicon vendors (including ST, TI, NXP, ADI, Nordic, Microchip, Renesas), as it offers DSP (digital signal processing) functionality traditionally found in more expensive devices and is low-power.

Filtering libraries and support

Arm and ASN provide developers with extensive easy-to-use tooling and tried and tested software libraries used internationally by tens of thousands of developers.

The Arm CMSIS-DSP software framework is interesting as it provides IoT developers with a rich collection of fast mathematical and vector functions, interpolation functions, digital filtering (FIR/IIR) and adaptive filtering (LMS) functions, motor control functions (e.g. PID controller), complex math functions and supports various data types, including fixed and floating point. The important point to make here is that all of these functions have been optimised for Arm Cortex-M processors, allowing you to focus on your application rather than worrying about optimisation.

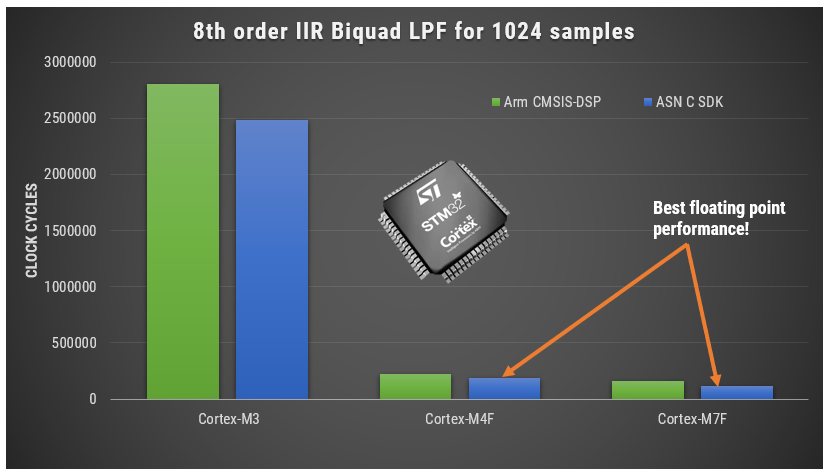

Despite the broad functionality, the CMSIS-DSP library is somewhat limited for filters, so the flexible ASN DSP filtering library can be used instead, which supports the higher numerical accuracy Direct Form Transposed FIR filter structure and single section IIR filters. A benchmark of ASN’s floating point application-specific DSP filtering library versus Arm’s CMSIS-DSP library is shown below for three types of Arm cores.

As seen, the performance of the ASN library is slightly faster by virtue of the application-specific nature of the implementation. The C code is automatically generated from the ASN Filter Designer tool.

What have we learned?

Digital filters are divided into the following two categories:

- Infinite impulse response (IIR)

- Finite impulse response (FIR)

IIR (infinite impulse response) filters are generally chosen for applications where linear phase is not too important and memory is limited. They have been widely deployed in audio equalisation, biomedical sensor signal processing, IoT/AIoT smart sensors and high-speed telecommunication/RF applications.

FIR (finite impulse response) filters are generally chosen for applications where linear phase is important and a decent amount of memory and computational performance are available. They have a widely deployed in audio and biomedical signal enhancement applications.

ASN Filter Designer provides engineers with everything they need to design, experiment and deploy complex IIR and FIR digital filters for a variety of IoT sensor measurement applications. These advantages coupled with automatic C code generation with ASN’s DSP filtering library functionality allow engineers to design, validate and then deploy their designs to an Arm Cortex-M processor within hours rather than more traditional routes that could take days.

Author

-

View all posts

View all postsSanjeev is a RTEI (Real-Time Edge Intelligence) visionary and expert in signals and systems with a track record of successfully developing over 26 commercial products. He is a Distinguished Arm Ambassador and advises top international blue chip companies on their AIoT/RTEI solutions and strategies for I5.0, telemedicine, smart healthcare, smart grids and smart buildings.

6 reasons why ASN Filter Designer is a powerful real-time DSP platform e.g. life math scripting, tool creates your technical specification and documentation

UI experience 2020 pack

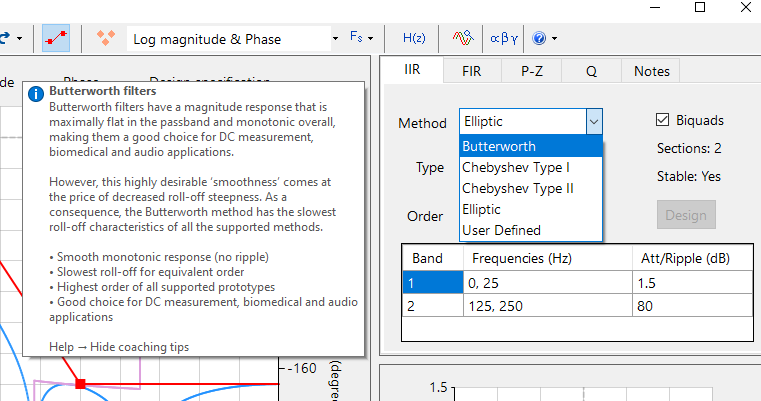

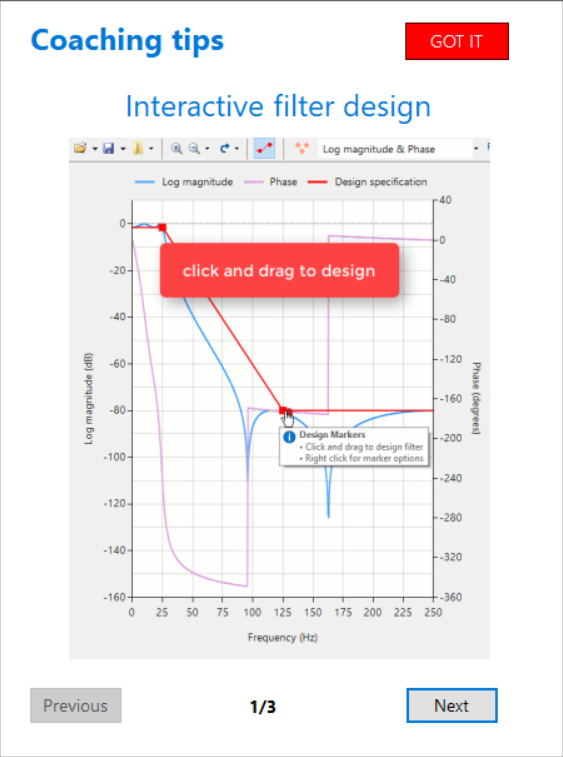

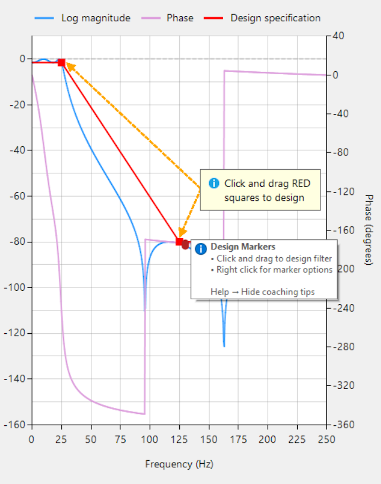

After downloading the ASN Filter Designer, most people just want to play with the tool, in order to get a feeling of whether it works for them. But how do you get started with the ASN Filter Designer? Based on some great user feedback, ASNFD v4.4 now comes with the UI experience 2020 pack. This pack includes detailed coaching tips, an enhanced user experience and step-by-step instructions to get you started with your design.

A quick overview of the ASN Filter Designer v4.4 is given below, and we’re sure that you’ll agree that it’s an awesome tool for DSP IIR/FIR digital filter design!

The ASN Filter Designer has a fast, intuitive user interface. Interactively design, validate and deploy your digital filter within minutes rather than hours. However, getting started with DSP Filter Design can be difficult, especially when you don’t have deep knowledge of digital signal processing. Most people just want to experiment with a tool to get a feeling whether it works for them (sure, there are tutorials and videos). But where do you start?

Start experimenting with filter design immediately

That’s why we have developed the UI Experience 2020 pack. Based on user feedback, we’ve created detailed tooltips and animations of key functionality. Within minutes, you’ll get a kick-start into functionalities such as chart zooming, panning and design markers.

Coaching tips, enhanced user experience, step-by-step instructions

Based on user feedback, the UI Experience 2020 pack includes:

- Extensive coaching tips

- Detailed explanations of design methods and types of filters

- Enhanced user experience:

- cursors

- animations

- visual effects

- Short cuts to detailed worked solutions, tutorials and step-by-step instructions

Feedback from the user community has been very positive indeed! By providing detailed tooltips and animations of key functionality, the initial hurdle of designing a filter with your desired specifications has now been significantly simplified.

So start with the ASN Filter Designer right away, and cut your development costs by up to 75%!

Modern embedded processors, software frameworks and design tooling now allow engineers to apply advanced measurement concepts to smart factories as part of the I4.0 revolution.

In recent years, PM (predictive maintenance) of machines has received great attention, as factories look to maximise their production efficiency while at the same time retaining the invaluable skills of experienced foremen and production workers.

Traditionally, a foreman would walk around the shop floor and listen to the sounds a machine would make to get an idea of impending failure. With the advent of I4.0 AIoT technology, microphones, edge DSP algorithms and ML may now be employed in order to ‘listen’ to the sounds a machine makes and then make a classification and prediction.

One of the major challenges is how to make a computer hear like a human. In this article we will discuss how sound weighting curves can make a computer hear like a human, and how they can be deployed to an Arm Cortex-M microcontroller for use in an AIoT application.

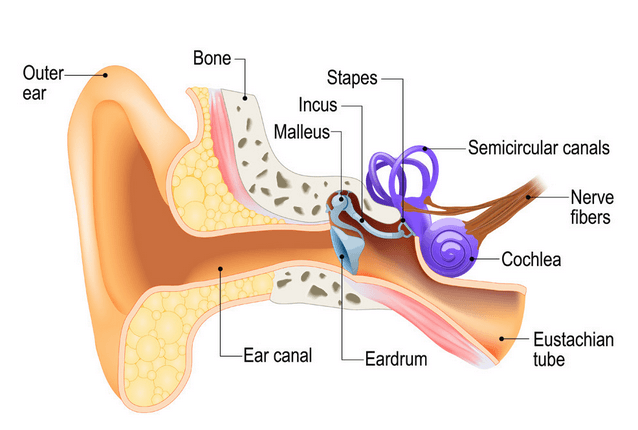

Physics of the human ear

An illustration of the human ear shown below. As seen, the basic task of the ear is to translate sound (air vibration) into electrical nerve impulses for the brain to interpret.

The ear achieves this via three bones (Stapes, Incus and Malleus) that act as a mechanical amplifier for vibrations received at the eardrum. These amplified sounds are then passed onto the Cochlea via the Oval window (not shown).

The Cochlea (shown in purple) is filled with a fluid that moves in response to the vibrations from the oval window. As the fluid moves, thousands of nerve endings are set into motion. These nerve endings transform sound vibrations into electrical impulses that travel along the auditory nerve fibres to the brain for analysis.

Modelling perceived sound

Due to complexity of the fluidic mechanical construction of the human auditory system, low and high frequencies are typically not discernible. Researchers over the years have found that humans are most perceptive to sounds in the 1-6kHz range, although this range varies according to the subject’s physical health.

This research led to the definition of a set of weighting curves: the so-called A, B, C and D weighting curves, which equalises a microphone’s frequency response. These weighting curves aim to bring the digital and physical worlds closer together by allowing a computerised microphone-based system to hear like a human.

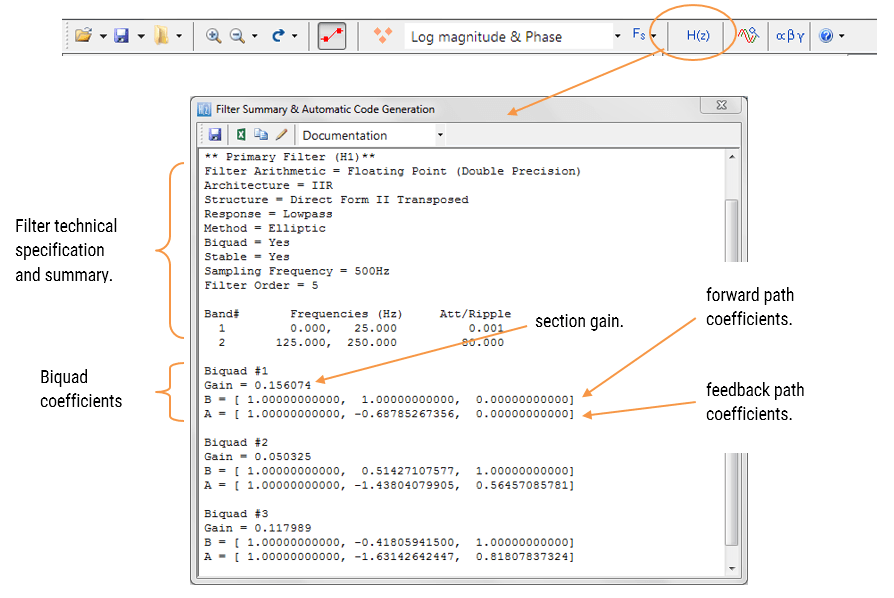

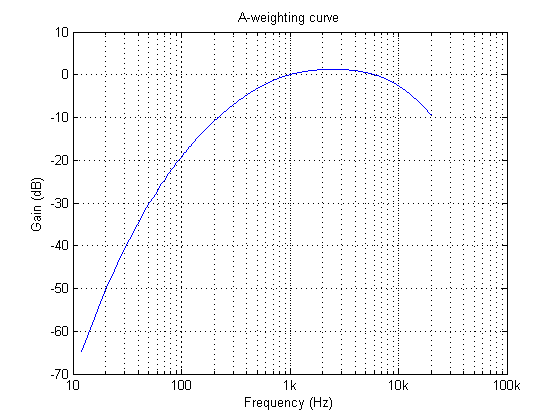

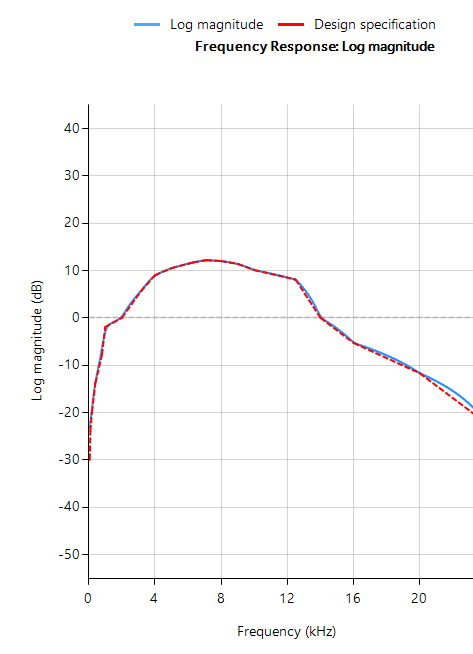

The A-weighing curve is the most widely used as it is mandated by IEC-61672 to be fitted to all sound level meters. The B and D curves are hardly ever used, but C-weighting may be used for testing the impact of noise in telecoms systems.

The frequency response of the A-weighting curve is shown above, where it can be seen that sounds entering our ears are de-emphasised below 500Hz and are most perceptible between 0.5-6kHz. Notice that the curve is unspecified above 20kHz, as this exceeds the human hearing range.

ASN FilterScript

ASN’s FilterScript symbolic math scripting language offers designers the ability to take an analog filter transfer function and transform it to its digital equivalent with just a few lines of code.

The analog transfer functions of the A and C-weighting curves are given below:

\(H_A(s) \approx \displaystyle{7.39705×10^9 \cdot s^4 \over (s + 129.4)^2\quad(s + 676.7)\quad (s + 4636)\quad (s + 76655)^2}\)

\(H_C(s) \approx \displaystyle{5.91797×10^9 \cdot s^2\over(s + 129.4)^2\quad (s + 76655)^2}\)

These analog transfer functions may be transformed into their digital equivalents via the bilinear() function. However, notice that \(H_A(s) \) requires a significant amount of algebracic manipulation in order to extract the denominator cofficients in powers of \(s\).

Convolution

A simple trick to perform polynomial multiplication is to use linear convolution, which is the same algebraic operation as multiplying two polynomials together. This may be easily performed via FilterScript’s conv() function, as follows:

y=conv(a,b);

As a simple example, the multiplication of \((s^2+2s+10)\) with \((s+5)\), would be defined as the following three lines of FilterScript code:

a={1,2,10};

b={1,5};

y=conv(a,b);

which yields, 1 7 20 50 or \((s^3+7s^2+20s+50)\)

For the A-weighting curve Laplace transfer function, the complete FilterScript code is given below:

ClearH1; // clear primary filter from cascade

Main() // main loop

a={1, 129.4};

b={1, 676.7};

c={1, 4636};

d={1, 76655};

aa=conv(a,a); // polynomial multiplication

dd=conv(d,d);

aab=conv(aa,b);

aabc=conv(aab,c);

Na=conv(aabc,dd);

Nb = {0 ,0 , 1 ,0 ,0 , 0, 0}; // define numerator coefficients

G = 7.397e+09; // define gain

Ha = analogtf(Nb, Na, G, "symbolic");

Hd = bilinear(Ha,0, "symbolic");

Num = getnum(Hd);

Den = getden(Hd);

Gain = getgain(Hd)/computegain(Hd,1e3); // set gain to 0dB@1kHz

Frequency response of analog vs digital A-weighting filter for \(f_s=48kHz\). As seen, the digital equivalent magnitude response matches the ideal analog magnitude response very closely until \(6kHz\).

The ITU-R 486–4 weighting curve

Another weighting curve of interest is the ITU-R 486–4 weighting curve, developed by the BBC. Unlike the A-weighting filter, the ITU-R 468–4 curve describes subjective loudness for broadband stimuli. The main disadvantage of the A-weighting curve is that it underestimates the loudness judgement of real-world stimuli particularly in the frequency band from about 1–9 kHz.

Due to the precise definition of the 486–4 weighting curve, there is no analog transfer function available. Instead the standard provides a table of amplitudes and frequencies – see here. This specification may be directly entered into FilterScript’s firarb() function for designing a suitable FIR filter, as shown below:

ClearH1; // clear primary filter from cascade

ShowH2DM;

interface L = {10,400,10,250}; // filter order

Main()

// ITU-R 468 Weighting

A={-29.9,-23.9,-19.8,-13.8,-7.8,-1.9,0,5.6,9,10.5,11.7,12.2,12,11.4,10.1,8.1,0,-5.3,-11.7,-22.2};

F={63,100,200,400,800,1e3,2e3,3.15e3,4e3,5e3,6.3e3,7.1e3,8e3,9e3,1e4,1.25e4,1.4e4,1.6e4,2e4};

A={-30,A}; // specify arb response

F={0,F,fs/2};

Hd=firarb(L,A,F,"blackman","numeric");

Num=getnum(Hd);

Den={1};

Gain=getgain(Hd);

firarb() function for \(f_s=48kHz\)As seen, FilterScript provides the designer with a very powerful symbolic scripting language for designing weighting curve filters. The following discussion now focuses on deployment of the A-weighting filter to an Arm based processor via the tool’s automatic code generator. The concepts and steps demonstrated below are equally valid for FIR filters.

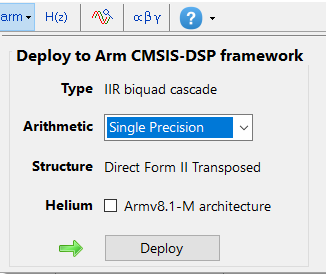

Automatic code generation to Arm processor cores via CMSIS-DSP

The ASN Filter Designer’s automatic code generation engine facilitates the export of a designed filter to Cortex-M Arm based processors via the CMSIS-DSP software framework.

The tool’s built-in analytics and help functions assist the designer in successfully configuring the design for deployment. Professional licence users may expedite the deployment by using the Arm deployment wizard that automates the steps described below.

Steps required for Educational licence users only

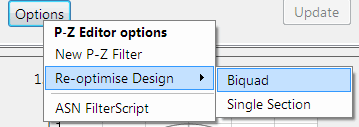

Before generating the code, the H2 filter (i.e. the filter designed in FilterScript) needs to be firstly re-optimised (transformed) to an H1 filter (main filter) structure for deployment. The options menu can be found under the P-Z tab in the main UI.

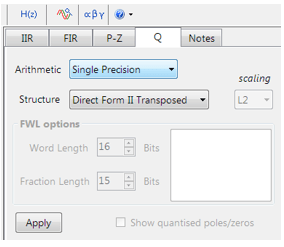

All floating point IIR filters designs must be based on Single Precision arithmetic and either a Direct Form I or Direct Form II Transposed filter structure. The Direct Form II Transposed structure is advocated for floating point implementation by virtue of its higher numerically accuracy.

Quantisation and filter structure settings can be found under the Q tab (as shown on the left). Setting Arithmetic to Single Precision and Structure to Direct Form II Transposed and clicking on the Apply button configures the IIR considered herein for the CMSIS-DSP software framework.

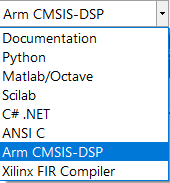

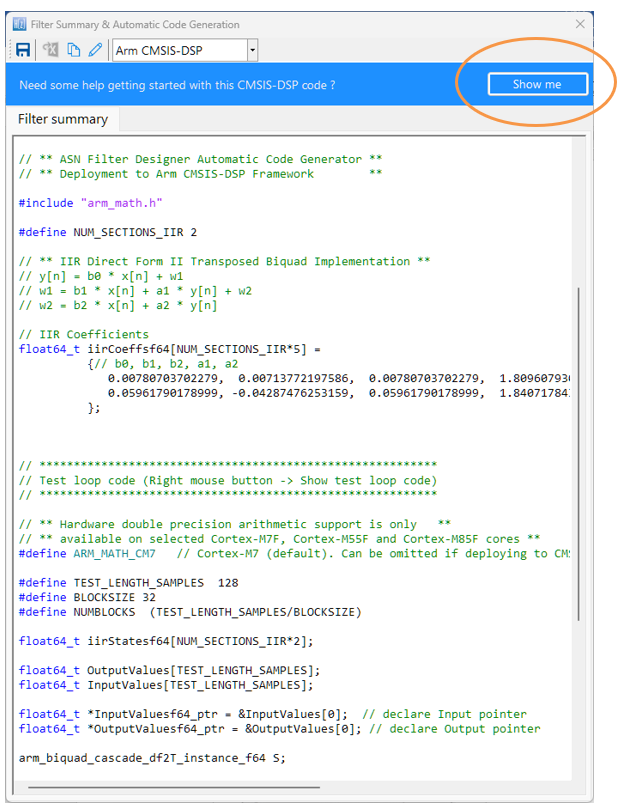

Select the Arm CMSIS-DSP framework from the selection box in the filter summary window:

The automatically generated C code based on the CMSIS-DSP framework for direct implementation on an Arm based Cortex-M processor is shown below:

As seen, the ASN Filter Designer’s automatic code generator generates all initialisation code, scaling and data structures needed to implement the A-weighting filter IIR filter via Arm’s CMSIS-DSP library. A detailed help tutorial is available by clicking on the Show me button.

Author

-

View all posts

View all postsSanjeev is a RTEI (Real-Time Edge Intelligence) visionary and expert in signals and systems with a track record of successfully developing over 26 commercial products. He is a Distinguished Arm Ambassador and advises top international blue chip companies on their AIoT/RTEI solutions and strategies for I5.0, telemedicine, smart healthcare, smart grids and smart buildings.

IIR (infinite impulse response) filters are generally chosen for applications where linear phase is not too important and memory is limited. They have been widely deployed in audio equalisation, biomedical sensor signal processing, IoT/IIoT smart sensors and high-speed telecommunication/RF applications and form a critical building block in algorithmic design.

Advantages

- Low implementation footprint: requires less coefficients and memory than FIR filters in order to satisfy a similar set of specifications, i.e., cut-off frequency and stopband attenuation.

- Low latency: suitable for real-time control and very high-speed RF applications by virtue of the low coefficient footprint.

- May be used for mimicking the characteristics of analog filters using s-z plane mapping transforms.

Disadvantages

- Non-linear phase characteristics.

- Requires more scaling and numeric overflow analysis when implemented in fixed point.

- Less numerically stable than their FIR (finite impulse response) counterparts, due to the feedback paths.

Definition

An IIR filter is categorised by its theoretically infinite impulse response,

Practically speaking, it is not possible to compute the output of an IIR using this equation. Therefore, the equation may be re-written in terms of a finite number of poles \(p\) and zeros \(q\), as defined by the linear constant coefficient difference equation given by:

where, \(a(k)\) and \(b(k)\) are the filter’s denominator and numerator polynomial coefficients, who’s roots are equal to the filter’s poles and zeros respectively. Thus, a relationship between the difference equation and the z-transform (transfer function) may therefore be defined by using the z-transform delay property such that,

As seen, the transfer function is a frequency domain representation of the filter. Notice also that the poles act on the output data, and the zeros on the input data. Since the poles act on the output data, and affect stability, it is essential that their radii remain inside the unit circle (i.e. <1) for BIBO (bounded input, bounded output) stability. The radii of the zeros are less critical, as they do not affect filter stability. This is the primary reason why all-zero FIR (finite impulse response) filters are always stable.

A discussion of IIR filter structures for both fixed point and floating point can be found here.

Classical IIR design methods

A discussion of the most commonly used or classical IIR design methods (Butterworth, Chebyshev and Elliptic) will now follow. For anybody looking for more general examples, please visit the ASN blog for the many articles on the subject.

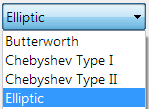

ASN Filter Designer’s graphical designer supports the design of the following four IIR classical design methods:

- Butterworth

- Chebyshev Type I

- Chebyshev Type II

- Elliptic

The algorithm used for the computation first designs an analog filter (via an analog design prototype) with the desired filter specifications specified by the graphical design markers – i.e. pass/stopband ripple and cut-off frequencies. The resulting analog filter is then transformed via the Bilinear z-transform into its discrete equivalent for realisation.

Biquad implementations are advocated for numerical stability.

The Bessel prototype is not supported, as the Bilinear transform warps the linear phase characteristics. However, a Bessel filter design method is available in ASN FilterScript.

As discussed below, each method has its pros and cons, but in general the Elliptic method should be considered as the first choice as it meets the design specifications with the lowest order of any of the methods. However, this desirable property comes at the expense of ripple in both the passband and stopband, and very non-linear passband phase characteristics. Therefore, the Elliptic filter should only be used in applications where memory is limited and passband phase linearity is less important.

The Butterworth and Chebyshev Type II methods have flat passbands (no ripple), making them a good choice for DC and low frequency measurement applications, such as bridge sensors (e.g. loadcells). However, this desirable property comes at the expense of wider transition bands, resulting in low passband to stopband transition (slow roll-off). The Chebyshev Type I and Elliptic methods roll-off faster but have passband ripple and very non-linear passband phase characteristics.

Comparison of classical design methods

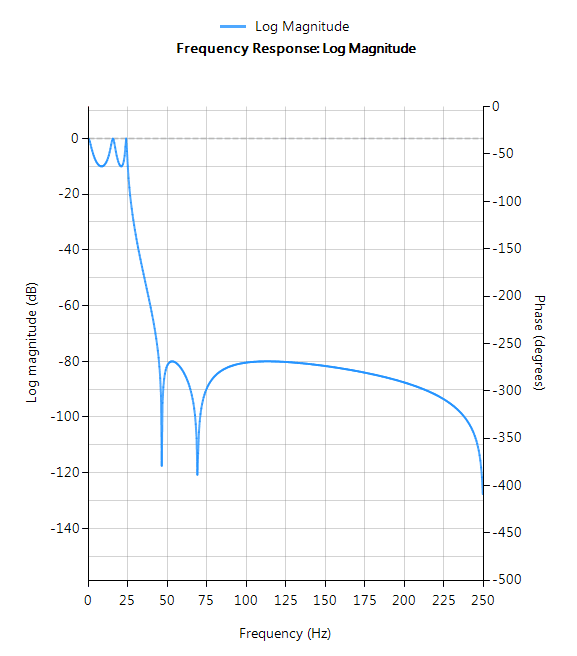

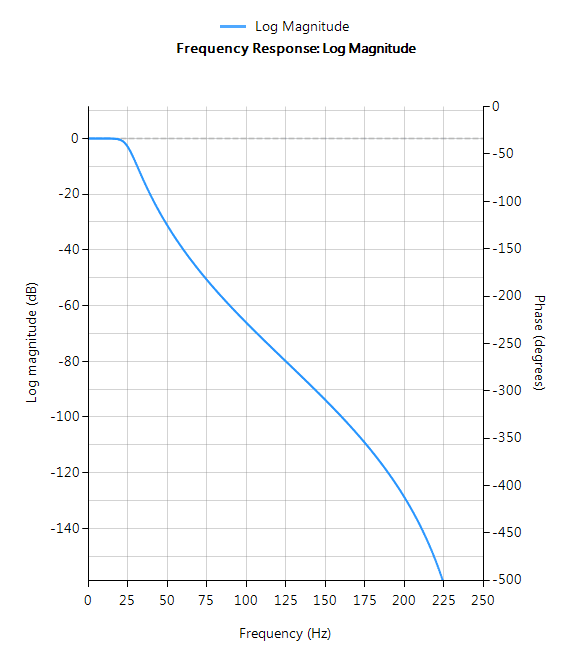

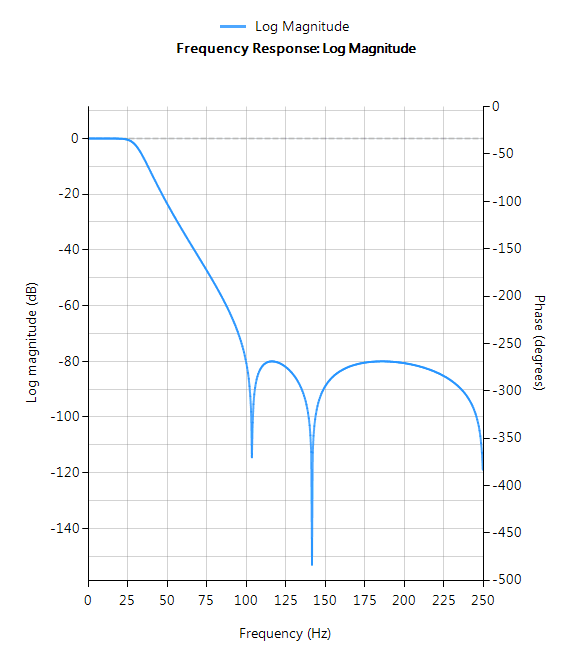

The frequency response charts shown below, show the differences between the various design prototype methods for a 5th order lowpass filter with the same specifications. As seen, the Butterworth response is the slowest to roll-off and the Elliptic the fastest.

Elliptic

Elliptic filters offer steeper roll-off characteristics than Butterworth or Chebyshev filters, but are equiripple in both the passband and the stopband. In general, Elliptic filters meet the design specifications with the lowest order of any of the methods discussed herein.

Filter characteristics

- Fastest roll-off of all supported prototypes

- Equiripple in both the passband and stopband

- Lowest order filter of all supported prototypes

- Non-linear passband phase characteristics

- Good choice for real-time control and high-throughput (RF applications) applications

Butterworth

Butterworth filters have a magnitude response that is maximally flat in the passband and monotonic overall, making them a good choice for DC and low frequency measurement applications, such as loadcells. However, this highly desirable ‘smoothness’ comes at the price of decreased roll-off steepness. As a consequence, the Butterworth method has the slowest roll-off characteristics of all the methods discussed herein.

Filter characteristics

- Smooth monotonic response (no ripple)

- Slowest roll-off for equivalent order

- Highest order of all supported prototypes

- More linear passband phase response than all other methods

- Good choice for DC measurement and audio applications

Chebyshev Type I

Chebyshev Type I filters are equiripple in the passband and monotonic in the stopband. As such, Type I filters roll off faster than Chebyshev Type II and Butterworth filters, but at the expense of greater passband ripple.

Filter characteristics

- Passband ripple

- Maximally flat stopband

- Faster roll-off than Butterworth and Chebyshev Type II

- Good compromise between Elliptic and Butterworth

Chebyshev Type II

Chebyshev Type II filters are monotonic in the passband and equiripple in the stopband making them a good choice for bridge sensor applications. Although filters designed using the Type II method are slower to roll-off than those designed with the Chebyshev Type I method, the roll-off is faster than those designed with the Butterworth method.

Filter characteristics

- Maximally flat passband

- Faster roll-off than Butterworth

- Slower roll-off than Chebyshev Type I

- Good choice for DC measurement applications

Author

-

View all posts

View all postsSanjeev is a RTEI (Real-Time Edge Intelligence) visionary and expert in signals and systems with a track record of successfully developing over 26 commercial products. He is a Distinguished Arm Ambassador and advises top international blue chip companies on their AIoT/RTEI solutions and strategies for I5.0, telemedicine, smart healthcare, smart grids and smart buildings.

Did you know that there are 23 billion IoT embedded devices currently deployed around the world? This figure is expected to grow to a whopping 1 trillion devices by 2050!

Less known, is that 80% of IoT devices are based around Arm’s Cortex-M microcontroller technology. Sometimes clients ask us if we support their Arm Cortex-M based demo-board of choice. The answer is simply: yes!

200+ IC vendors supported

The ASN Filter Designer has an automatic code generator for Arm Cortex-M cores, which means that we support virtually every Arm based demo-board: ST, Cypress, NXP, Analog Devices, TI, Microchip/Atmel and over 200+ other manufacturers. Our compatibility with Arm’s free CMSIS-DSP software framework removes the frustration of implementing complicated digital filters in your IoT application – leaving you with code that is optimal for Cortex-M devices and that works 100% of the time.

The Arm Cortex-M family of microcontrollers are an excellent match for IoT applications. Some of the advantages include:

- Low power and cost – essential for IoT devices

- Microcontroller with DSP functionality all-in-one

- Embedded hardware security functionality

- Cortex-M4 and M7 cores with hardware floating support (enhanced microcontrollers)

- Freely available CMSIS-DSP C library: supporting over 60 signal processing functions

Automatic code generation for Arm’s CMSIS-DSP software framework

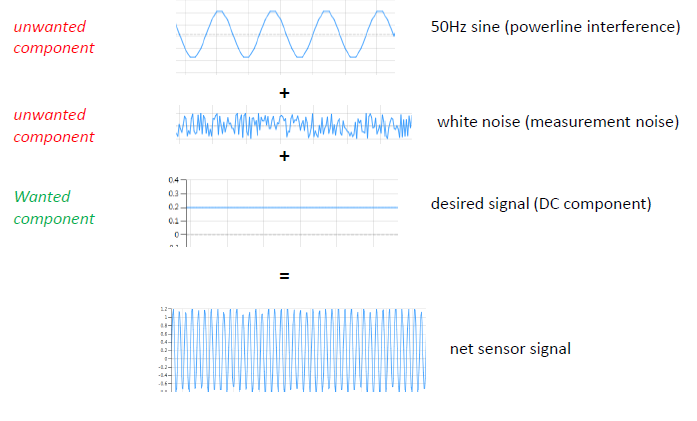

Simply load your sensor data into the ASN Filter Designer signal analyser and perform a detailed analysis. After identifying the wanted and unwanted components of your signal, design a filter and test the performance in real-time on your test data. Export the designed design to Arm MDK, C/C++ or integrate the filter into your algorithm in another domain, such as in Matlab, Python, Scilab or Labview.

Use the tool in your RAD (rapid application development) process, by taking advantage of the automatic code generation to Arm’s CMSIS-DSP software framework, and quickly integrate the DSP filter code into your main application code.

Let the tool analyse your design, and automatically generate fully compliant code for either the M0, M0+, M3, M4 and the newer M23 and M33 Cortex cores. Deploy your design within minutes rather than hours.

Proud Arm knowledge partner

We are proud that we are an Arm knowledge partner! As an Arm DSP knowledge partner, we will be kept informed of their product roadmap and progress for the coming years.

Try it for yourself and see the benefits that the ASN Filter Designer can offer your organisation by cutting your development costs by up to 75%!

How to choose between analog signal processing (ASP) and digital signal processing (DSP). How too chose for ASP or DSP; analog filters or digital filters?

The internet of things (IoT) has gained tremendous popularity over the last few years, as many organisations strive to add IoT smart sensor technologies to their product portfolios. The basic paradigm centres around connecting everything to everything, and exchanging all data. This could be house hold appliances to more blue sky applications, such as smart cities. But what does this particularly mean for you?

Almost all IoT applications involve the use of sensors. But how do SME and even multi-national organisations transform their legacy product offering into a 21st century IoT application? One the first challenges that many organisations face is how to migrate to an IoT application while balancing design time, time to market, budget and risk.

Sounds interesting? Then read further….

We recently completed a project for a client who manufactured their own sensors, but wanted to improve their sensor measurement accuracy from ±10% to better than ±0.5% without going down the road of a massive re-design project.

The question that they asked us was simply: “Is it possible to get high measurement accuracy performance from a signal that is corrupted with all kinds of interference components without a hardware re-design?”

Our answer: “Yes, but the winning recipe centres around knowing what architectural building blocks to use”.

Traditionally, many design bureaus will evaluate the sensor performance and try and improve the measurement accuracy performance by designing new hardware and adding a few standard basic filtering algorithms to the software. This sort of intuitive approach can lead to very high development costs for only a modest increase in sensor performance. For many SMEs these costs can’t be justified, but perhaps there’s a better way?

Algorithms: the winning recipe

Algorithms and mathematics are usually regarded by many organisations as ‘academic black magic’ and are generally overlooked as a solution for a robust IoT commercial application. As a consequence very few organisations actually take the time to analytically analyse a sensor measurement problem, and those who do invent something tend to come up with something that’s only useable in the lab. There has been a trend over the years to turn to Universities or research institutes, but once again the results are generally too academic and are based more on getting journal publications, rather than a robust solution suitable for the market.

Our experience has been that the winning recipe centres around the balance of knowing what architectural blocks to use, and having the experience to assess what components to filter out and what components to enhance. In some cases, this may even involve some minor modifications to the hardware in order to simplify the algorithmic solution. Unfortunately, due to the lack of investment in commercially experienced, academically strong (Masters, PhD) algorithm developers and the pressure of getting a project to the finish line, many solutions (even from reputable multi-national organisations) that we’ve seen over the years only result in a moderate increase in performance.

Despite the plethora of commercially available data analysis software, many organisations opt to do basic data analysis in Microsoft Excel, and tend to stay away from any detailed data analysis as it’s considered an unnecessary academic step that doesn’t really add any value. This missed opportunity generally leads to problems in the future, where products need to be recalled for a ‘round of patchwork’ in order solve the so called ‘unforeseen problems’. A second disadvantage is that performance of the sensors may be only satisfactory, whereas a more detailed look may have yielded clues on how make the sensor performance good or in some cases even excellent.

Algorithms can save the day!

“Although many organisations regard data analysis as a waste of money, our experience and customers prove otherwise.”

Investing in detailed data analysis at the beginning of a project usually results in some good clues as to what needs to be filtered out and what needs to be enhanced in order to achieve the desired performance. In many cases, these valuable clues allow experienced algorithm developers to concoct a combination of signal processing building blocks without re-designing any hardware – which is very desirable for many organisations! Our experience has shown that this fundamental first step can cut project development costs by as much as 75%, while at the same time achieving the desired smart sensor measurement performance demanded by the market.

So what does this all mean in the real world?

Returning the story of our customer, after undertaking a detailed data analysis of their sensor data, our developers were able design a suitable algorithm achieving a ±0.1% measurement accuracy from the original ±10% with only minor modifications to the hardware. This enabled the customer to present his IoT application at a trade show and go into production on time, and yes, we stayed within budget!

Author

-

View all posts

View all postsSanjeev is a RTEI (Real-Time Edge Intelligence) visionary and expert in signals and systems with a track record of successfully developing over 26 commercial products. He is a Distinguished Arm Ambassador and advises top international blue chip companies on their AIoT/RTEI solutions and strategies for I5.0, telemedicine, smart healthcare, smart grids and smart buildings.

Advanced Solutions Nederland B.V.

Lipperkerstraat 146

751DD Enschede

The Netherlands

General enquiries: info@advsolned.com

Technical support: support@advsolned.com

Sales enquiries: sales@advsolned.com

© 2026 Advanced Solutions Nederland B.V.

Privacy Policy | Disclaimer